How does Flathub even work? The CDN and caching layer

There is one specific way in which non-corporate open source projects typically document how their infrastructure works: not at all, and Flathub is no different. The full picture likely lives only in my brain, and while it could be sorted out by anyone (especially in this LLM age, yay or nay), why should it only be me thinking at night about all the single points of failure?

Like any system that evolved naturally, it's all over the place. It's tempting to tell its history chronologically, but even then, it's difficult to find a good entry point. Instead, this post focuses on what happens when users call flatpak install; later entries will cover the website and, finally, the build infrastructure. Buckle up!

CDN, caching proxies, the master server

The secret of making computers work well is to have them not do anything at all, and that's the story behind serving Flathub's OSTree repository. Content-addressed objects are extremely cacheable as they are immutable, offloading the effort to the CDN provider.

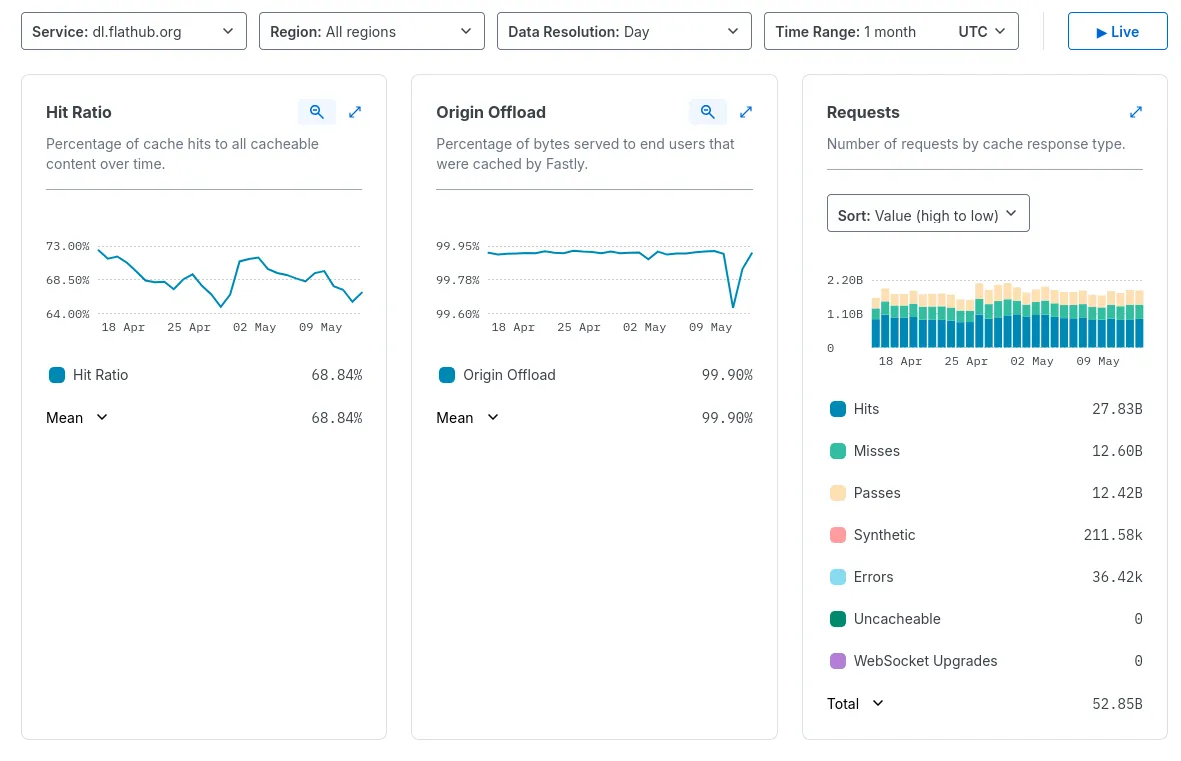

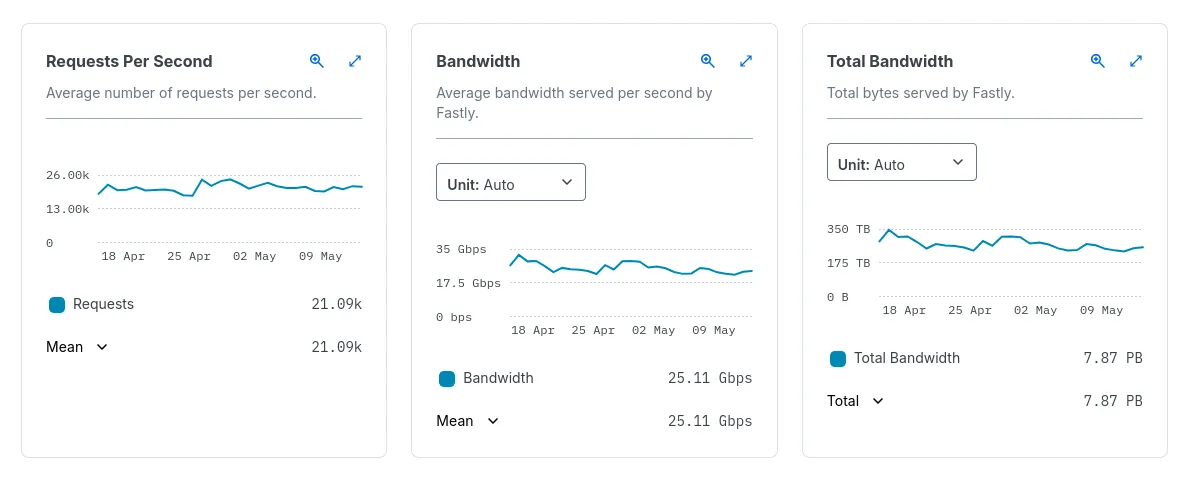

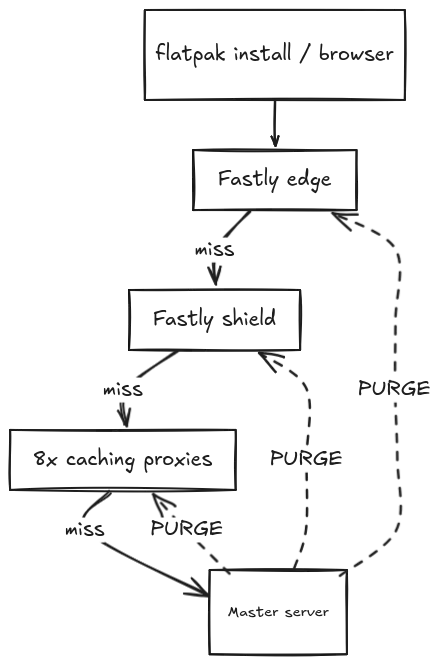

When the client connects to dl.flathub.org, you can be certain it hits some layer of cache. Almost all the heavy lifting is done by Fastly. At peak, when both EMEA and North America are awake and at computers, 50 million requests per hour are cache hits served by Fastly's infrastructure, with a modest 20 million being misses passed down to our servers. There would be no Flathub without Fastly; Fastly does it completely for free, not even for fake Internet points as we are incredibly bad at highlighting what our sponsors do for us.

You can never have enough cache, so Fastly's edge servers talk to a Fastly-managed shield server, which caches the most requested objects to avoid spilling over too much to us. For legit cache misses, the request is served by one of the 8 caching proxies we run at different VPS providers. We use a consistent hashing director at Fastly, which picks the backend based on the path being requested. In the past, we used a dumb round-robin, but as a result each caching proxy held its own independent copy of the working set, wasting disk space and producing a higher miss rate against the master server. Hashing by URL makes the fleet behave like one big cache instead of N copies.
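To make the difference from round-robin concrete, here is a minimal sketch of a consistent-hash ring in Python. It is not Fastly's director implementation; the backend names, the object paths and the number of virtual nodes are all made up for illustration. The point it shows is that a given path always maps to the same proxy, and that removing a proxy only remaps the paths that proxy owned.

```python
import bisect
import hashlib
from collections import Counter


def _point(value: str) -> int:
    """Map a string to a position on the hash ring."""
    return int.from_bytes(hashlib.sha256(value.encode()).digest()[:8], "big")


class HashRing:
    """Minimal consistent-hash ring with virtual nodes (illustrative only)."""

    def __init__(self, backends, vnodes=100):
        # Each backend gets `vnodes` points on the ring to even out the load.
        self._ring = sorted(
            (_point(f"{backend}#{i}"), backend)
            for backend in backends
            for i in range(vnodes)
        )
        self._points = [point for point, _ in self._ring]

    def pick(self, path: str) -> str:
        """Return the backend owning the first ring point at or after the path's hash."""
        idx = bisect.bisect_left(self._points, _point(path)) % len(self._ring)
        return self._ring[idx][1]


# Hypothetical backend names; the real fleet is not named in this post.
backends = [f"proxy-{n}" for n in range(1, 9)]
ring = HashRing(backends)

# Made-up object paths standing in for requests that missed at Fastly.
paths = [f"/repo/objects/{i % 256:02x}/{i}.filez" for i in range(1000)]

# The same path always lands on the same proxy, so only one proxy caches it.
assert ring.pick(paths[0]) == ring.pick(paths[0])

# Removing one proxy only remaps the paths that proxy used to own.
smaller = HashRing(backends[:-1])
moved = [p for p in paths if ring.pick(p) != smaller.pick(p)]
print(f"{len(moved)} of {len(paths)} paths moved after removing one proxy")
print(Counter(ring.pick(p) for p in paths))
```

With round-robin, every proxy would eventually see every path; with the ring, each proxy only ever sees its own slice of the URL space.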

These days, the caching proxy fleet consists of 3 servers at Mythic Beasts, 2 servers at AWS, another 2 at NetCup and a single server at DigitalOcean. We don't collect overly detailed metrics, but on average, each proxy serves around 1 TB/month back to Fastly and pulls roughly 5 TB/month from origin. With only 100 GB of disk space per proxy against a multi-TB working set, we're not so much caching the long tail as smoothing it. In an ideal world, we would be retaining much more data at this layer, but it's not the world we live in.

Each of these servers is running the latest stable Debian release. The requests are served by the usual nginx setup with proxy_cache enabled. There is some custom Lua code for invalidating certain paths once the publishing of new builds finishes (spoilers!). Vanilla nginx doesn't support the PURGE method, and third-party modules like ngx_cache_purge have not seen any maintenance for over 10 years. In the end, it was more maintainable to write Lua code to calculate the cache key of a URL and then run os.remove to "purge" it from the cache.
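The trick works because nginx's on-disk cache layout is predictable: the file name is the MD5 hex digest of the cache key, nested in directories taken from the end of that digest according to the levels parameter of proxy_cache_path. The sketch below shows the idea in Python rather than the Lua we actually run. The levels=1:2 layout, the cache directory and the key format are assumptions for illustration, not our real configuration.

```python
import hashlib
import os
from pathlib import Path


def cache_file_path(cache_dir: str, cache_key: str, levels=(1, 2)) -> Path:
    """Compute where nginx stores the cached response for cache_key.

    nginx names the file after the MD5 hex digest of the key and nests it in
    directories cut from the end of that digest, one per `levels` entry.
    """
    digest = hashlib.md5(cache_key.encode()).hexdigest()
    parts = []
    end = len(digest)
    for width in levels:
        parts.append(digest[end - width:end])
        end -= width
    return Path(cache_dir, *parts, digest)


def purge(cache_dir: str, cache_key: str) -> bool:
    """Delete the cached file if present; return whether anything was removed."""
    try:
        os.remove(cache_file_path(cache_dir, cache_key))
        return True
    except FileNotFoundError:
        return False


# Hypothetical key, assuming nginx's default proxy_cache_key of
# $scheme$proxy_host$request_uri and a made-up upstream host name.
example_key = "http" + "origin.example" + "/repo/summary"
print(cache_file_path("/var/cache/nginx", example_key))
```

Removing the file behind nginx's back is safe enough for this purpose: the next request for that key simply misses and is fetched from the master server again.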

There's also a systemd timer for refreshing the Fastly IP allowlist. We used to expose these servers publicly, but a vision of everything crumbling down due to a DDoS attack kept me awake at night so this had to change.
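For the curious, the job behind such a timer can be tiny. Fastly publishes its address ranges as JSON at api.fastly.com/public-ip-list, with `addresses` and `ipv6_addresses` keys. The sketch below turns that document into nginx allow/deny directives; the function names and the output format are my own choices for this example, not necessarily what runs on our proxies.

```python
import ipaddress
import json
import urllib.request

FASTLY_IP_LIST_URL = "https://api.fastly.com/public-ip-list"


def fetch_ip_list(url: str = FASTLY_IP_LIST_URL) -> dict:
    """Download Fastly's published IP ranges as a dict."""
    with urllib.request.urlopen(url, timeout=10) as response:
        return json.load(response)


def render_allowlist(ip_list: dict) -> str:
    """Render nginx access rules that admit only the listed networks."""
    networks = ip_list.get("addresses", []) + ip_list.get("ipv6_addresses", [])
    lines = []
    for cidr in networks:
        ipaddress.ip_network(cidr)  # raises ValueError on a malformed entry
        lines.append(f"allow {cidr};")
    lines.append("deny all;")
    return "\n".join(lines) + "\n"


# Sample data using documentation ranges, not real Fastly networks.
sample = {"addresses": ["192.0.2.0/24"], "ipv6_addresses": ["2001:db8::/32"]}
print(render_allowlist(sample), end="")
```

In a real deployment, the timer would call fetch_ip_list(), write the rendered rules to a file included from the nginx config, and reload nginx only if the content changed.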

On the far end of this setup sits a lonely physical server living in one of Mythic Beasts' datacenters. This is The Server holding the entire Flathub repo on the ZFS equivalent of RAID10: two 2-disk mirror vdevs across which ZFS stripes data. There is more nuance to this setup, but the ultimate advantage is that we can tolerate a disk failure in each of the mirrors, while being less taxing to resilver after a swap. The entire reachable data set is around 4 TB, with the remaining 6 TB unused. There will be more about the repository maintenance later on!

Ironically, it's the only server running Ubuntu. At the time, it was the easiest way to have support for ZFS readily available. We could re-provision it to Debian, but on the other hand, what for? It works fine that way. It has survived at least 2 major upgrades between LTS-es; if it ain't broke, don't fix it.

The master server itself has to be partially public as it's where new builds are being uploaded. It no longer exposes the raw Flathub repository for the same reason caching proxies don't. This is accomplished with Tailscale and a lightweight ACL config ensuring caching proxies can talk only to the HTTP server running on the main repo server and vice versa (for issuing PURGE requests). Yes, all involved parties have public IP addresses assigned, so this could technically be a pure WireGuard setup, but I prefer to make this someone else's concern, especially given how generous Tailscale's free plan is.

It's not much, but it's honest work. For how little we have, the file-serving half of Flathub's infrastructure works unreasonably well. Stay tuned for part 2!